ATI introduced its Radeon line of graphics adaptors shortly after Nvidia had released its all-conquering GeForce2 GTS chipset. Though the Radeon line subsequently diversified, the original intent was to deliver a chipset that could not only compete with the GTS in sheer performance, but also scale better from 16- to 32-bit colour without losing much in frame rates. More or less, it worked.

The original Radeon DDR card, though slower than the GTS in lower-resolution benchmarks, was quite close in performance, and in high-resolution (1024x768 and above) 32-bit colour it actually had a considerable advantage. For a brief period, the Radeon 32MB DDR was the fastest card available for gamers with tricked-out systems.

Unfortunately for ATI, Nvidia soon brought out its GTS-enhancing Detonator 3 drivers and introduced the GeForce2 Ultra chipset, which left the Radeon DDR in the dust at all resolutions. ATI's only response was a 64MB version of the DDR card, a feat the GTS chipset had already matched. Predictably, this did not make the Radeon much more competitive, and Nvidia resumed its domination of the 3D chipset market, despite ATI's almost covert release of the slightly beefed-up Radeon SE.

Fast forward to this year and Nvidia's release of the GeForce3 chipset, with support for DirectX 8.0 and 'revolutionary' programming flexibility for graphics coders. ATI is now a generation and a half behind its competitor in the graphics accelerator arena... you know this can't last.

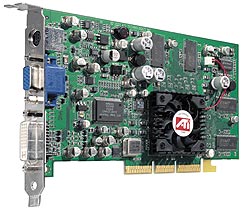

ATI's newest graphics chipset, known during development as the R200, will first be seen in the 8500 line of cards. The line comprises the Radeon 8500, a straight 64MB gaming card designed to compete directly with the GF3, and the 8500DV, a successor to the very successful Radeon All-in-Wonder. The 8500DV will also add built-in FireWire support into the mix.

Once again, ATI is releasing its new chipset after Nvidia has had time to build up support for its own. This time, though, ATI's card has a better hook. The 8500 is the first chipset to be fully compatible with Microsoft's DirectX 8.1 API, due for release with Windows XP, and it is armed with at least one unique feature that modern games should actually be able to use (once suitably patched).

The Radeon 8500 Chip

The Radeon 8500 GPU is a 0.15-micron, 60-million-transistor chip with four rendering pipelines, each with two texture units. This setup differs considerably from the original Radeon, doubling the number of pixel rendering pipelines while dropping the third texture unit per pipeline that the original Radeon sported. While the rendering pipeline layout of the Radeon 8500 is superficially identical to that of the GeForce3, the difference lies in the 8500's full hardware support for DirectX 8.1's pixel shader 1.4 specification, which allows it to apply six different texture samples to a pixel in a single pass (using three clock cycles).

Though the GeForce3 has the same number of rendering pipelines and texture units, it currently supports only pixel shader 1.3 and lower, which limits it to a maximum of four textures per pixel in a single pass (using two clock cycles), something the 8500 can also do. The GeForce3 will require an additional rendering pass to complete a pixel with more than four texture samples.
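To make the pass arithmetic concrete, here is a rough Python sketch built only on the per-pass limits stated above (six textures under pixel shader 1.4, four under 1.3 and lower) and on two texture units per pipeline applying two textures per clock, which reproduces the three- and two-clock figures quoted. The names are hypothetical, and the model ignores shader arithmetic, memory bandwidth and the cost of re-sending geometry for extra passes.

```python
import math

# Both chips have two texture units per pipeline.
TEXTURE_UNITS_PER_PIPE = 2

# Maximum texture samples per pixel in one rendering pass, as described above:
# pixel shader 1.4 (Radeon 8500) allows six; PS 1.3 and lower (GeForce3) allow four.
MAX_TEXTURES_PER_PASS = {"Radeon 8500": 6, "GeForce3": 4}


def passes_needed(chip: str, textures: int) -> int:
    """Rendering passes needed to apply `textures` samples to one pixel."""
    return math.ceil(textures / MAX_TEXTURES_PER_PASS[chip])


def clocks_per_pass(textures_in_pass: int) -> int:
    """Clock cycles to apply the given textures, two per clock per pipeline."""
    return math.ceil(textures_in_pass / TEXTURE_UNITS_PER_PIPE)


if __name__ == "__main__":
    for n in (4, 6, 8):
        for chip in MAX_TEXTURES_PER_PASS:
            print(f"{chip}: {n} textures -> {passes_needed(chip, n)} pass(es)")
    # Six textures take three clocks; four take two.
    print(clocks_per_pass(6), clocks_per_pass(4))
```

With seven or eight textures, for example, both chips need two passes, so how much the 8500's extra per-pass headroom helps depends on exactly how many textures a game layers on each pixel.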

As with the original Radeon's third texture unit per pipeline, it remains to be seen whether developers will actually make use of the 8500's ability to apply two extra textures per pixel per pass in the nine months or so before the inevitable next-generation chipset arrives. More on this later in the article.

The 8500 chip will be clocked at 250MHz, while the DDR memory will run at 275MHz. This gives the 8500 theoretical fill rates of 1 gigapixel per second (250MHz x 4 pixel rendering pipelines) and 2 gigatexels per second (250MHz x 4 pipelines x 2 texture units per pipeline). Compare this with the 366 megapixel/1.1 gigatexel figures of its predecessor and the 800 megapixel/1.6 gigatexel figures of the GeForce3, if you like that sort of thing. Keep in mind that as 3D games become more complex, these peak numbers will not translate into equivalent real-world performance.
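The fill-rate figures above are simple multiplications, sketched below in Python. The Radeon 8500's numbers come straight from the article; the core clocks for the original Radeon (183MHz, with two pipelines of three texture units each) and the GeForce3 (200MHz) are back-calculated from the quoted 366 and 800 megapixel figures, so treat them as assumptions. The `Chip` class is purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chip:
    name: str
    core_mhz: float          # core clock in MHz
    pipelines: int           # pixel rendering pipelines
    tex_units_per_pipe: int  # texture units on each pipeline

    @property
    def megapixels(self) -> float:
        # Peak pixel fill rate: one pixel per pipeline per clock.
        return self.core_mhz * self.pipelines

    @property
    def megatexels(self) -> float:
        # Peak texel fill rate: every texture unit samples once per clock.
        return self.megapixels * self.tex_units_per_pipe


CHIPS = [
    Chip("Radeon 8500", 250, 4, 2),
    # Assumed clocks, back-calculated from the article's fill-rate figures.
    Chip("Radeon (original)", 183, 2, 3),
    Chip("GeForce3", 200, 4, 2),
]

if __name__ == "__main__":
    for chip in CHIPS:
        print(f"{chip.name}: {chip.megapixels:.0f} Mpixel/s, "
              f"{chip.megatexels:.0f} Mtexel/s")
```

The original Radeon comes out at 1,098 megatexels per second, which the article rounds to 1.1 gigatexels.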

The memory controller is a slightly upgraded version of the original Radeon's 128-bit DDR interface. The only difference is that instead of fetching 128 bits of data twice per clock cycle, the controller now waits for the full 256 bits.
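Whatever the controller does internally, the bus width and memory clock set a theoretical peak bandwidth. The sketch below assumes the 275MHz memory clock quoted earlier and two data transfers per clock for DDR, and reports decimal gigabytes; the function name is hypothetical, and sustained bandwidth in games will be lower.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, memory_mhz: float,
                        transfers_per_clock: int = 2) -> float:
    """Theoretical peak bandwidth in GB/s (10**9 bytes/s) for a DDR memory bus."""
    bytes_per_transfer = bus_width_bits // 8
    return bytes_per_transfer * transfers_per_clock * memory_mhz * 1e6 / 1e9


if __name__ == "__main__":
    # 128-bit DDR at 275MHz: 128 bits x 2 transfers = 256 bits per clock.
    print(f"{peak_bandwidth_gb_s(128, 275):.1f} GB/s")
```

128 bits x 2 transfers per clock is the 256 bits per clock mentioned above; at 275MHz that works out to 8.8GB/s.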